Security teams are collecting more telemetry than ever, but the value they get from it hasn’t scaled at the same rate. Infrastructure has become more distributed, systems more complex, and the volume and variety of data harder to reason about, especially during investigations.

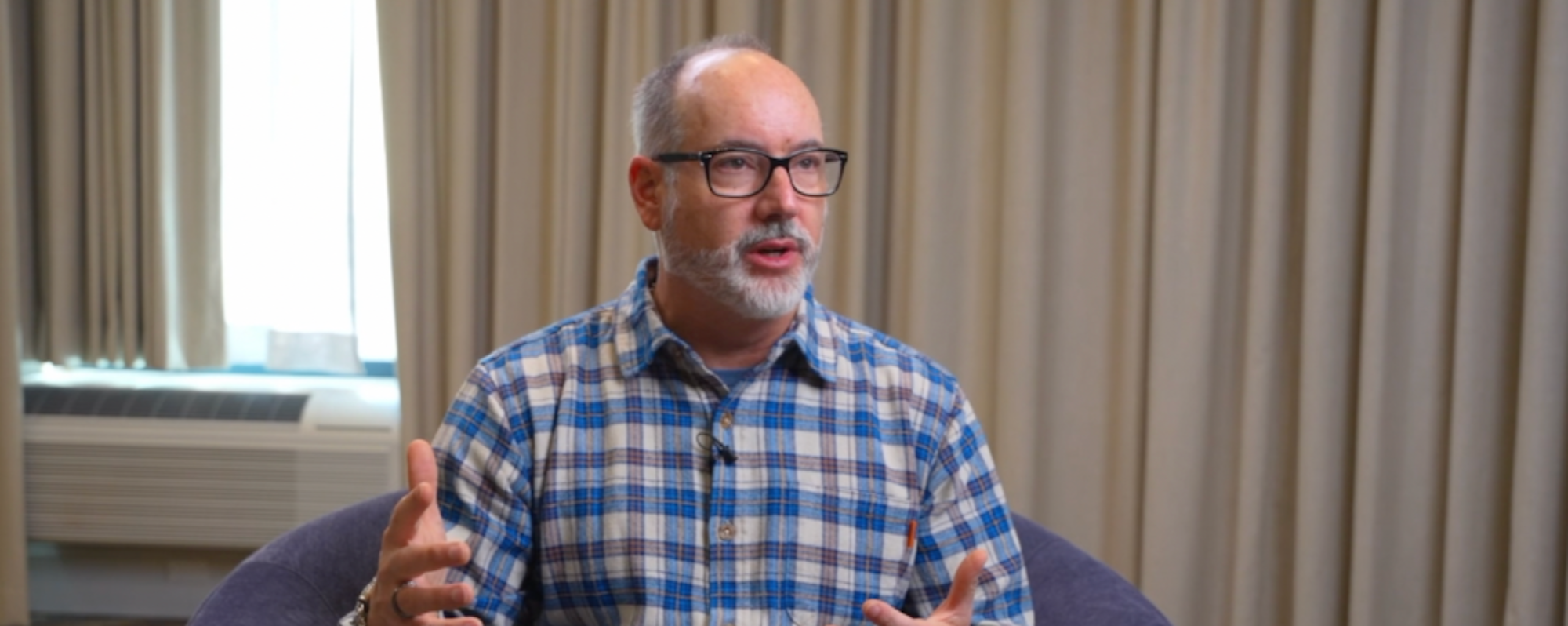

In this conversation, Rick Doten, former Healthplan CISO at Centene and longtime security leader, reflects on how logging, telemetry, and security decision-making are evolving – and why many of today’s challenges are less about tools and more about mindset.

You’ve spent time on both the offensive and governance sides of security. What shaped how you think about data and risk?

One moment that really stuck with me was when I was running ethical hacking teams about twenty years ago. Someone asked for a privacy assessment, and I remember thinking, “privacy is confidentiality, right?” I’m a hacker, what do I know?

But I got schooled pretty quickly. Privacy isn’t just about whether data leaks. It’s about appropriate use, consent, regulation. That really changed how I thought about data. It’s not just “can I break this,” it’s “what kind of data is this, how is it supposed to be used, and what happens if it’s misused?”

That was probably the first time I really started thinking about data as part of a broader ecosystem – governance, protection, impact – not just as something you defend or exploit.

What’s changing most rapidly in the threat landscape right now?

The complexity. When I started, things were simple. You had a website, an app server, a database. Then we moved into service-oriented architectures, and now everything is cloud, highly distributed, and increasingly automated. We’ve got AI agents doing things now.

All of that produces a lot more data. Not just more attack surface, but more places where data ends up (logs, memory, APIs), including sensitive data you might not even realize is there. And I think a lot of organizations don’t really understand how complex their platforms have become.

It’s not just the volume of data. It’s the volume of transactions and interactions. Figuring out how to protect all of that is genuinely hard.

Where does logging and telemetry fit into a modern security program today?

Traditionally, the model was pretty clear. Tools generate telemetry, you aggregate it – usually into a SIEM – run detections, and have humans respond. That worked well for a long time.

But with the scale and complexity we’re dealing with now, that approach starts to fall apart. Even just from a timing standpoint. And in cloud environments, moving data around costs money.

So I think we need to be more deliberate about how telemetry is handled. Some things need to be detected close to where they happen. Some things might just go to ticketing. Some might go to analysis. Some might go to cold storage because you don’t actually need them right away.

The point is that not all data needs to be treated the same. And in that sense, security is catching up to how data scientists have been thinking about data for a long time.
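As a rough illustration of the tiered handling described above, here is a minimal Python sketch of a routing function that sends each event to one of the four destinations mentioned: local detection, ticketing, analysis, or cold storage. Everything in it is an assumption made for illustration, not something from the interview: the `Event` fields, the 0 to 10 severity scale, the `config_drift` event kind, and the thresholds are all hypothetical, and a real pipeline would base its rules on its own data and risk model.

```python
from dataclasses import dataclass
from enum import Enum


class Destination(Enum):
    """The four handling tiers named in the answer above."""
    EDGE_DETECTION = "edge_detection"  # detect close to where it happens
    TICKETING = "ticketing"
    ANALYSIS = "analysis"
    COLD_STORAGE = "cold_storage"


@dataclass
class Event:
    source: str    # hypothetical: which sensor or system produced the event
    kind: str      # hypothetical: event type label
    severity: int  # hypothetical scale: 0 (informational) to 10 (critical)


def route(event: Event) -> Destination:
    """Pick a destination for one event using illustrative, made-up rules."""
    # Urgent events are handled near the source rather than shipped first.
    if event.severity >= 8:
        return Destination.EDGE_DETECTION
    # Routine hygiene issues become tickets instead of alerts.
    if event.kind == "config_drift":
        return Destination.TICKETING
    # Mid-severity events are worth correlating and analyzing.
    if event.severity >= 4:
        return Destination.ANALYSIS
    # Everything else is kept cheaply in case it is needed later.
    return Destination.COLD_STORAGE


if __name__ == "__main__":
    samples = [
        Event("edr", "process_start", 9),
        Event("cloud", "config_drift", 3),
        Event("proxy", "http_request", 5),
        Event("dns", "query", 1),
    ]
    for e in samples:
        print(f"{e.source}/{e.kind} -> {route(e).value}")
```

The design choice worth noting is that the routing decision happens per event, before anything is aggregated, so data that is not needed right away never incurs the cost of being moved into a central store.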

If the tools exist, why isn’t every security team doing this?

A lot of it is just inertia. What teams are doing today still works. It’s comfortable. And when budgets are tight, people start asking: is this really the thing that moves the needle the most? Or do we have bigger gaps somewhere else?

So even if you know there might be a better way to handle telemetry, it’s hard to justify changing something that isn’t obviously broken, especially when there are other areas that feel more urgent.

What have you seen make a difference when teams do start changing how they work with telemetry?

Usually it starts small. Cost savings are often the first hook. Reducing duplication, cutting down ingestion, things like that.

But what really changes minds is when people see the operational impact. Getting detections earlier. Starting response sooner. Reducing how much manual work humans have to do during an investigation.

Even if it’s just in one area, like endpoint data, once teams see that improvement – faster, more complete, better quality – it becomes easier to build on it.

What do you wish security leaders understood better about telemetry and data?

I think we still underestimate how much of this is a data science problem.

Security often gets framed as “find bad things, stop bad things.” But the intelligence you get out of telemetry is useful for a lot more than that. Mature organizations use it to understand impact, attack paths, and priorities.

Instead of reacting to individual alerts, they’re looking at patterns and trends. That’s a very different way of thinking from just playing whack-a-mole, and it requires treating telemetry as something you analyze, not just something you store.

How does AI fit into this picture?

I think AI can be really helpful if you think about it the right way. I like to describe it as having an unlimited number of interns with unlimited time.

Machines are very good at executing instructions quickly and consistently. Humans are bad at that. But humans are good at dealing with ambiguity and context.

AI can help enrich data, pull in additional context, make correlations, even ask questions of systems through APIs that logs alone don’t capture. And it can do that at scale and at speed, which helps support the humans doing the harder judgment calls.

Will AI make logs more or less important as a security control?

I think it changes how we think about them. Even the word “log” feels a bit archaic at this point.

What we’re really talking about is information coming out of systems that act like sensors. That information might be in a log, or it might come from an API, or somewhere else entirely.

If we shift the mindset from “log management” to “managing information from systems,” I think it opens up better ways of thinking about how to use it.

One final piece of advice for leaders trying to scale telemetry the right way?

I think it’s recognizing that the old model – everything goes into a SIEM and you deal with it later – isn’t going to scale.

Adversaries are already using data science and AI. Defenders need to evolve their thinking too. Not just about how to collect data more efficiently, but how to use it in a way that actually supports better decisions.