Simplify your security data pipeline

Beacon replaces custom-built plumbing and sprawling pipelines with a single, security-native SecDataOps layer that is easier to operate, easier to change, and built to scale with modern security teams and AI workflows.

Better Security Starts with Simpler, Smarter SecDataOps

Replace fragile custom plumbing

Eliminate the sprawl of scripts, collectors, filters, and ad hoc transformations that silently break and require constant maintenance.

Reduce ongoing engineering load

Beacon absorbs the operational complexity of scaling, error handling, schema changes, and routing so security engineers can focus on detections rather than data flow.

Make changes quickly and safely

Add sources, update schemas, route data to new tools, or support AI agents without rebuilding pipelines or risking downtime.

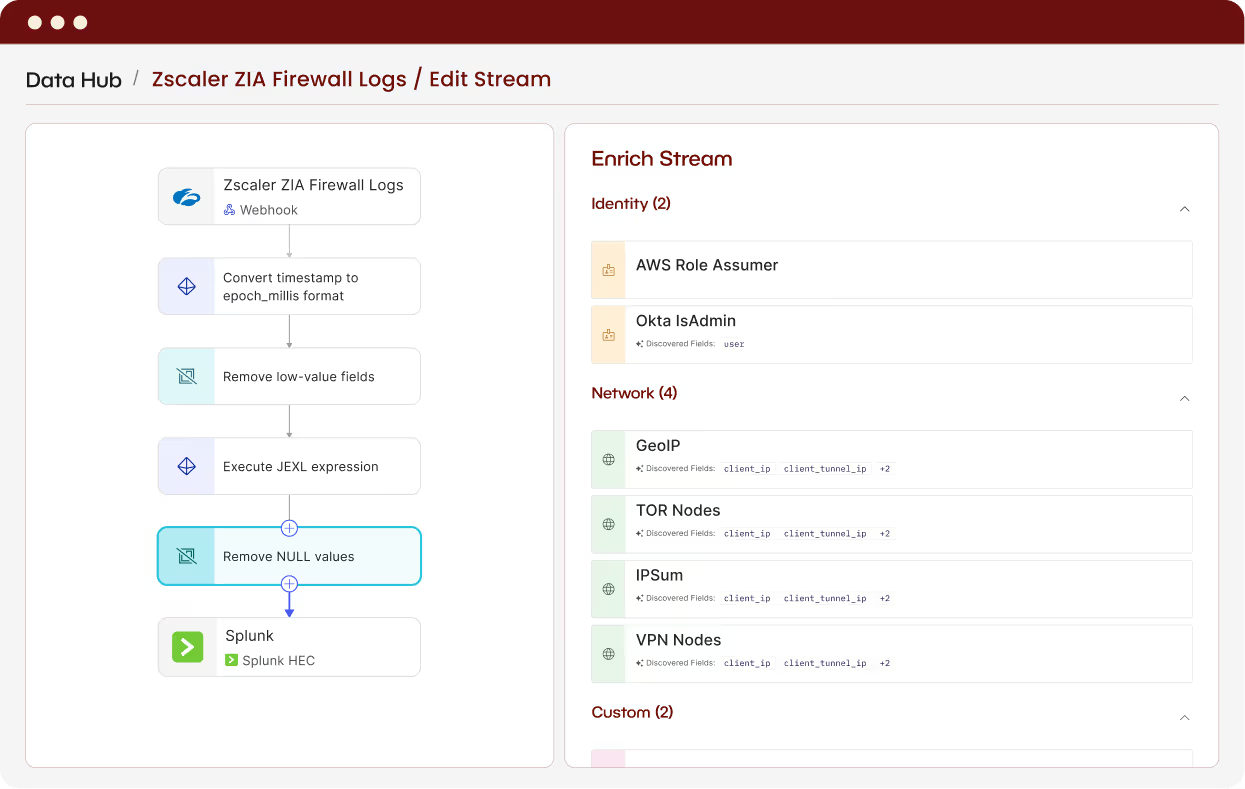

What a Simplified Security Data Pipeline Looks Like

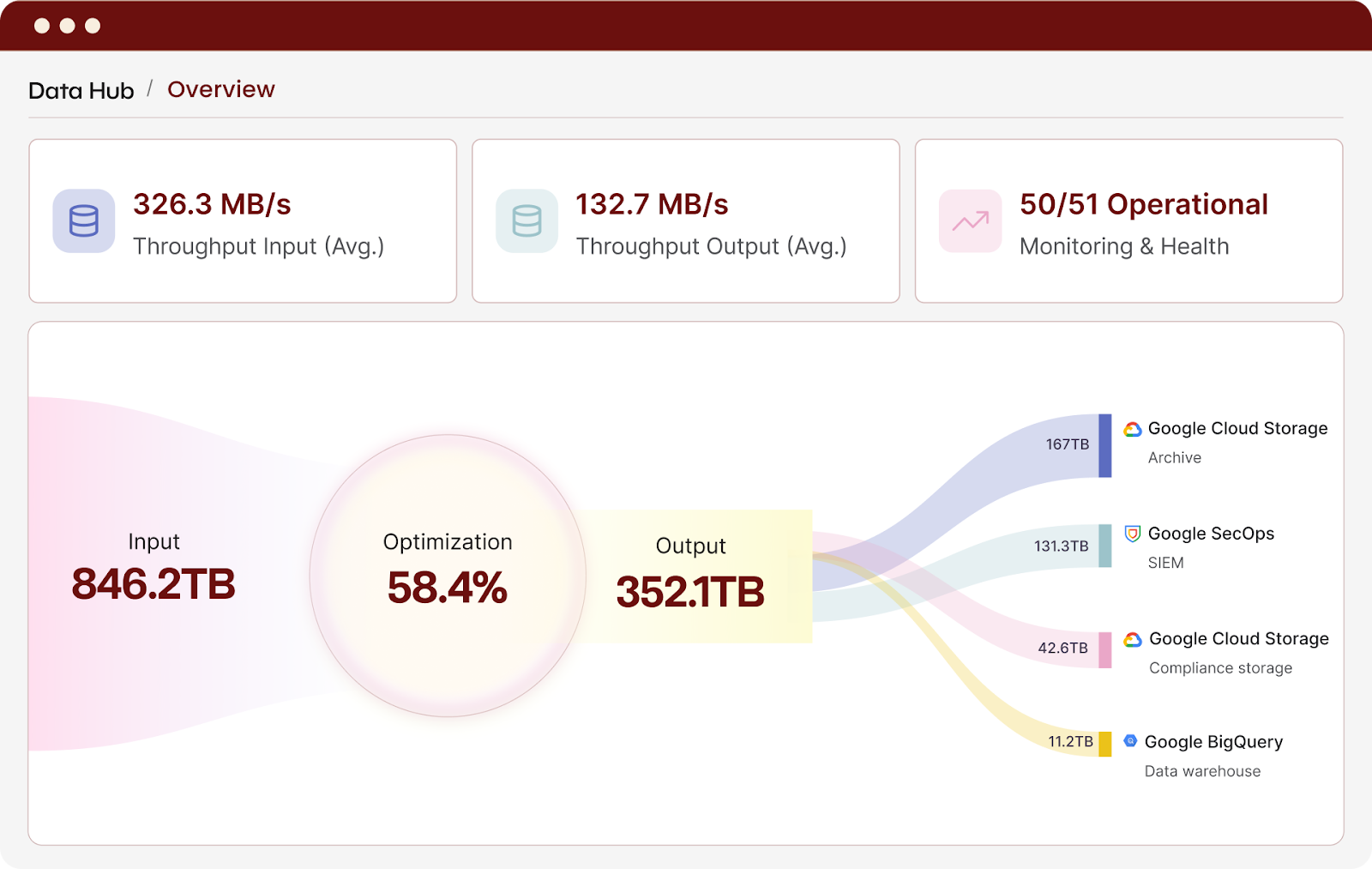

Beacon's security-driven data platform optimizes terabytes of important security logs spanning many sources. Data arrives enriched and normalized, and in the case of bloated VPC flow logs, reduced to 5% of their original size, enabling our security team and AI workflows to act immediately and effectively. We no longer choose between coverage and cost efficiency. We now have both, supported by a responsive team of security data experts.

Frequently Asked Questions

Pipeline simplification means reducing the number of tools, scripts, and custom integrations required to move security data from sources to destinations, while improving reliability, visibility, and flexibility through strong SecDataOps practices.

Beacon provides a dedicated SecDataOps layer that standardizes how security data is collected, normalized, enriched, and delivered to downstream tools. Instead of relying on custom pipelines and one-off integrations, teams use Beacon to operate and evolve their security data flows in a consistent, reliable way. This reduces operational overhead while improving data quality and confidence.

Beacon replaces custom-built log forwarding scripts, generic ETL and log routing tools, tool-specific collectors and transformations, and ad hoc filters used to manage SIEM cost.

All of this is consolidated into a single, security-native pipeline.

Generic pipelines move data but don’t understand it. Beacon is security-first, built with detection logic, investigation needs, and attacker behaviors in mind. Optimization, normalization, and enrichment are designed specifically for security outcomes.

No, it increases flexibility. Beacon decouples data sources from destinations, making it easier to change SIEMs, add new tools, or adopt AI agents without re-engineering pipelines.
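
To make the decoupling idea concrete, here is a minimal Python sketch of a routing layer that sits between sources and destinations. It is an illustration only, not Beacon's API: the `Router` class, the event fields, and the two in-memory "inboxes" are all hypothetical names chosen for this example.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = Dict[str, str]
Sink = Callable[[Event], None]


class Router:
    """Hypothetical router: sources publish by event type, sinks subscribe by event type."""

    def __init__(self) -> None:
        self._routes: Dict[str, List[Sink]] = defaultdict(list)

    def subscribe(self, event_type: str, sink: Sink) -> None:
        # Register a destination for one event type.
        self._routes[event_type].append(sink)

    def publish(self, event: Event) -> int:
        # Deliver the event to every subscribed sink; return how many received it.
        sinks = self._routes.get(event["type"], [])
        for sink in sinks:
            sink(event)
        return len(sinks)


# Stand-ins for a SIEM and an AI agent (assumed destinations, in-memory only).
siem_inbox: List[Event] = []
agent_inbox: List[Event] = []

router = Router()
router.subscribe("auth", siem_inbox.append)
delivered_before = router.publish({"type": "auth", "user": "alice"})

# Adding a new destination is one subscription; the publishing side is unchanged.
router.subscribe("auth", agent_inbox.append)
delivered_after = router.publish({"type": "auth", "user": "bob"})
```

In this sketch the source only ever calls `publish`, so swapping or adding a destination never touches source-side code. A production layer would presumably add buffering, retries, and schema handling on top of the same separation.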

Beacon includes self-healing pipelines, exactly-once delivery, late-arrival handling, and continuous health monitoring. This reduces silent data loss, broken integrations, and incident-time surprises.
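
One common way to get an exactly-once effect is to let senders retry freely and have the receiving side discard duplicates by event ID. The Python sketch below shows that general technique under stated assumptions; it does not describe how Beacon implements delivery, and `IdempotentSink` and the `id` field are hypothetical.

```python
from typing import Dict, List, Set


class IdempotentSink:
    """Hypothetical sink that ignores redelivered events based on a unique event ID."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()
        self.stored: List[Dict[str, str]] = []

    def deliver(self, event: Dict[str, str]) -> bool:
        """Store the event once; return False if it was a duplicate."""
        event_id = event["id"]
        if event_id in self._seen:
            return False
        self._seen.add(event_id)
        self.stored.append(event)
        return True


sink = IdempotentSink()
batch = [
    {"id": "e1", "msg": "login"},
    {"id": "e2", "msg": "logout"},
    {"id": "e1", "msg": "login"},  # retry of e1 after a simulated timeout
]
results = [sink.deliver(e) for e in batch]
```

Here the retried `e1` is acknowledged but not stored a second time, so downstream tools see each event once even though the sender delivered it twice. A real system would also need to bound or persist the set of seen IDs.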

Yes. Beacon is built to operate at petabyte scale, supporting multi-cloud, multi-region deployments and 100+ TB/day workloads with enterprise-grade reliability.

Simpler pipelines produce cleaner, more consistent data. Analysts and AI agents spend less time troubleshooting missing or malformed data and more time investigating, reducing MTTR and improving confidence in outcomes.

Most teams consolidate major portions of their pipeline within days to weeks, thanks to Beacon’s pre-built connectors, Recipes, and production-ready infrastructure.